Since I arrived at Canberra Grammar School, an LMS transition seemed to be on the cards. Engagement with Blackboard, the incumbent system, was low and anecdotal reports suggested disatisfaction.

A project with process

I had worked on a number of large projects based around an EdTech Project Management Process, based on PMBOK, and in 2018 the go-ahead was given for an LMS project. It was estimated that a proper transition would take around two years, which was an unprecedented length for an IT project in the School, but we were going to do it right.

Determining the need for change

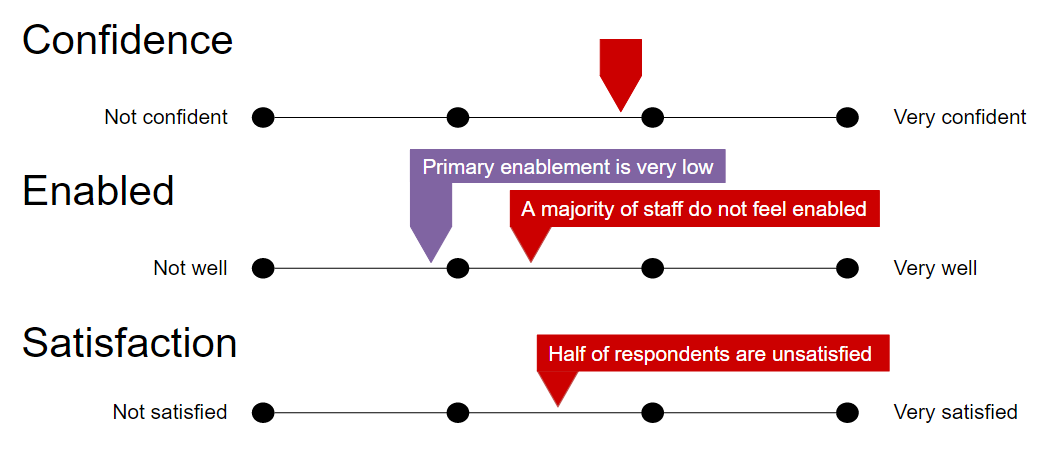

The first step was determining the need for change. Before we could commit to an expensive change, we wanted to objectively know it was necessary. At CGS, the LMS is used for three main purposes: as a learning environment, as a means of making announcements and as a portal to access other systems. A survey was created to gauge whether users felt confident, enabled and satisfied with the incumbent system across these purposes. We also asked users to identified how they were enabled and blocked in their use. The survey was voluntary but yielded a 61% response rate, which allowed confidence in the results. In relation to use as a learning environment, the findings showed users were confident, but most did not feel enabled and half were not satisfied. Similar results were found for other purposes of the system.

The initial attitudinal survey suggested that a change was warranted and identified a number of deficiencies to overcome in a replacement, such as the interface and ease of use.

Planning

Driving Questions

With a project determined as necessary, planning began. Driving questions had to be answered to identify the, mostly pedagogical needs, which included an improved online teaching environment, refined flows of assessment and reporting information, the potential to collect data for analytics, a focused communication system and a portal to link to other systems.

Coordination

Some history of the previous system was compiled for context and a rough schedule was started.

Consultation

The people who were going to be involved in the project were identified including:

- A Project Leader and Data Owner (an executive responsible for strategic change and respected teacher),

- A Consultative Committee (the standing EdTech Committee),

- A Change Manger (myself) and

- A broad range of Stakeholder Groups.

A RACI Matrix was drawn up to ensure the project members (and implicitly others involved), new what level of responsibility they had in the project.

Because the project was going to affect many users, the stakeholder groups consisted of a broad range of staff (Primary and High School teachers, pastoral leaders, executives, communications and support staff), students and parents, forming nine groups in all.

Scope

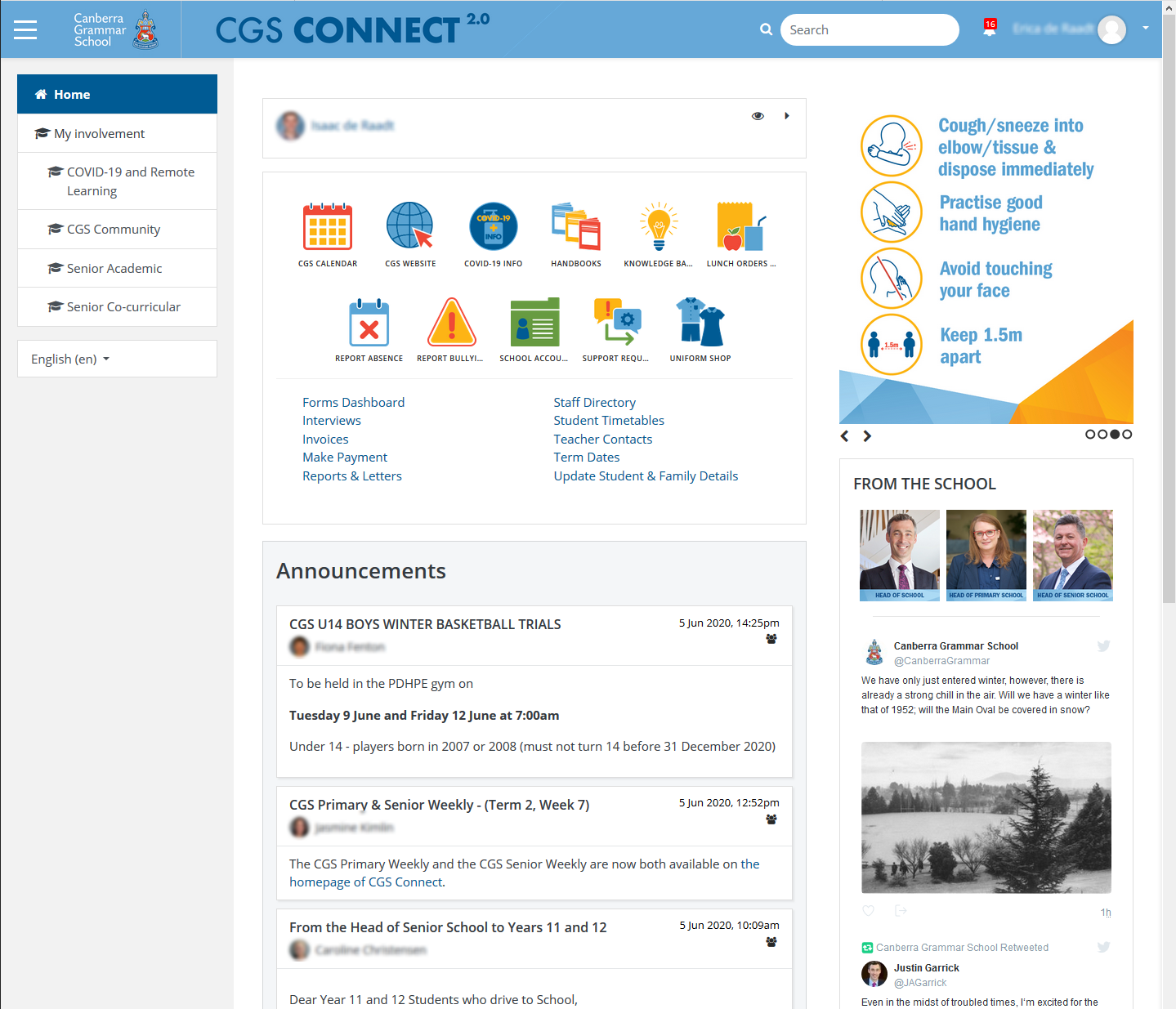

In the case if this project, it was important to identify the parts of the greater system that was being reviewed. The branding we use for the system “CGS Connect”, the consistent theming across systems and the transparent linking between systems meant that the boundary between the system were not obvious to all users, including executive staff. Some areas outside the system were thought by some to be in need of change, so limiting the scope to LMS, announcements and portal allowed the project to focus on a single system change.

With the scope set, it was possible to define the deliverables and objectives of the change and to describe exclusions from the change.

Time Management

The earlier rough schedule was further developed with dependencies added. Stages like Migration and the creation of new courses were added. A period of longevity of 3 to 5 years was suggested before a subsequent review.

Cost Management

Before starting to look at alternatives, it was worth defining a budget for the project. The main differentiation was going to be whether we paid for an externally hosted system or hosted our own, with customisations and development.

- Staff time costs were going to be significant for training. If we were going to make customisations then time of development staff time would be needed.

- Migration would be a large up-front cost and some budget was set aside for consultation.

- Depending on the system chosen, ongoing costs could vary, but an estimated budget was set to allow system costs to be compared against it. If the system was self hosted, those ongoing costs would be incurred locally as opposed to paying them to an organisation hosting the system externally.

Quality Management

Before starting a change, we set a number of quality measures were considered. The reason for doing this before starting was to allow for benchmark measurements to be made of the incumbent system. Some of the measures related to system use that could be counted or timed and some related to users’s attitudes, which would come through user testing and surveys.

A list of risks was drawn up, each with an estimate of probability and means of mitigation.

- Development overrun

- Migration overrun

- Resistance to adoption

- Focus on other projects

Considering these risks early was useful as each of them became relevant at stages of the project. It was good to have them stated in the plan and known to executives, so the project could be backed and prioritised when needed.

Communications Plan

Being a significant change, there was a long list of messages that needed to be communicated. Each message was defined with rough content, who would be responsible for sending and who would the recipients be and when the messages would be sent. The key goal of communications was to preempt the change in the minds of users and develop a sense of ownership for the new system.

Messages included notifications of coming change, invitations to be involved, demonstrations of functionality and project status reports. Recipients included various groups including staff, students and parents at various stages of the project. Messages were delivered by announcement email, at meetings and through written reports.

Procurement

With initial planning out of the way, the bulk of remaining planning time was spend comparing alternatives. The goal was to allow stakeholders to provide input from their perspective and feel they had contribute to the choosing of a new system and ultimately ownership of that system.

Sessions were held with each of the stakeholder groups. Based on an extensive list of possible LMS features, stakeholder groups collectively identified and prioritised requirements and each groups’ requirements were amalgamated. We used an electronic survey that allowed people to designate each of 89 possible requirements as “Unnecessary”, “Useful sometimes”, “Often useful” or “Essential”. Comments and general feedback were also collected for later consideration.

Each response was then given a weighted value with values averaged within groups and then across groups, giving each stakeholder group equal representation. The top 30 requirements became the criteria for comparing alternative systems.

Top 30 Requirements (by importance)

- Obvious, consistent navigation

- Search functionality

- Single sign-on integration

- Usable on small screens/touch screens

- Ability to attach/link to files to announcements

- Mobile notifications for announcements

- Linking to Google Drive files

- A tool for promoting School events

- Ability to use system when Internet is unavailable

- Up-to-date class/group enrolment information

- Greater control over announcement formatting

- Context specific landing page for different users

- Sharing sound/photos/videos

- A means of communicating with staff

- A means of parent communication

- Drag-and-drop resource sharing

- Linking to/embedding web pages

- Ability to elect announcement audience in granular way

- Accessibility aids for visually impaired

- Online archive of announcements

- Dedicated mobile app for activities

- Scheduling of future announcements

- A means of communicating with groups of students

- Understanding of timetables

- Multi-language support

- Surveys/forms to gather student feedback

- Integration with School calendars

- Ability to re-use content

- Linking to Google Docs, Sheets, etc

- Guest access for parents and other staff

There were numerous alternatives available. Armed with users’ criteria a number of systems were able to be eliminated through a lack of numerous necessary features. Other shortlisted systems were then scored numerically against the criteria, a process that required lengthy investigation and consultation with vendors.

For each criterion, a value was given as follows.

- 3 for a mature feature meeting the criterion

- 2 for partly meeting the criterion

- 1 for hard-to-apply or unproven against the criterion

- 0 for features absent or not advertised

Values were then weighted against the inverse value of the criterion (30 points for the highest priority down to 1 for the lowest) and then summed, leading to the following scores.

| 1891 |

| 2192 | |

| 2198 |

| 2401 | |

| 2930 |

| 3160 | |

| 3161 |

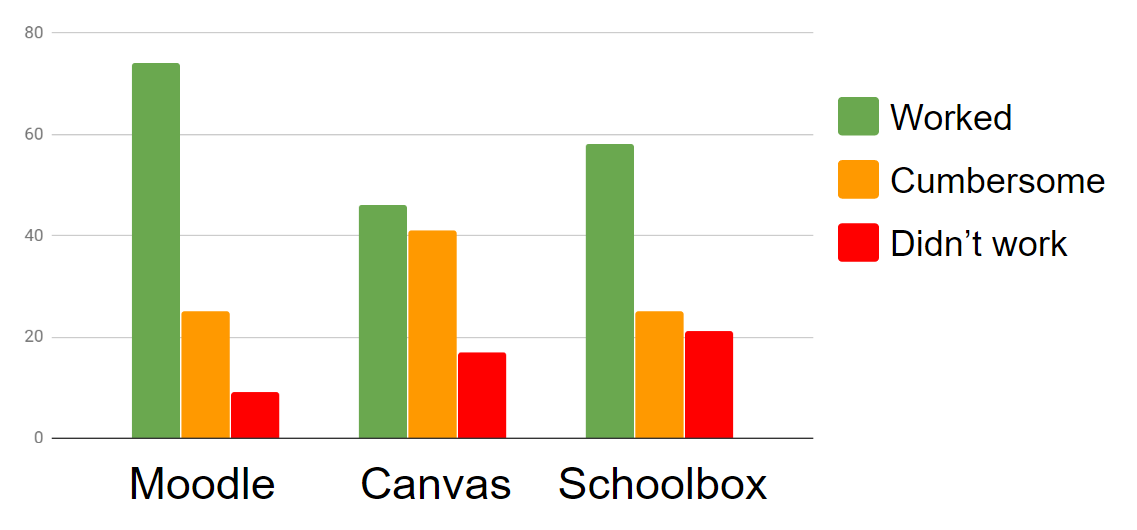

The three highest-scoring systems, Canvas, Schoolbox and Moodle, were selected for trialing. The challenge was to create an objective trial that would allow users to select a system to implement.

Technical criteria were defined and including integration with our pre-existing systems and the ability to theme and organise spaces in the system. Each of the trailed systems was able to accommodate these criteria to varying degrees. Some needed to be judged against the technical criteria through trialing.

Trailing – A blind taste test

An instance of each of the three systems was established and populated with representative course content. Each system was themed with School branding and obvious system naming was avoided to attempt blind evaluations.

Test scripts were created that covered most of the criteria, some tests combining multiple criteria. Test steps were defined for each of the three systems in an electronic form that led the user through the test. Staff and parent representatives were invited to complete a relevant test across each system and to rate their experience with the systems as “Worked”, “Worked, but was cumbersome” or “Didn’t work” together with free-form comments. Over 100 tests were completed by users leading to a solid set of opinions with Moodle proving most functional, followed by Schoolbox and Canvas.

Students were involved in the trials by another method. A group of Primary students and another group of High School students, who each were actively involved in computer-related activities, were given the opportunity to experience each system and run through some tests. A selection of the volunteers was interviewed through a talk-aloud process across the systems while observing their actions as they used each system. Students seemed happy to explore the systems freely with aspects of colour and imagery shown to be attractive to students, influencing the formation of their opinions about the functionality of the systems, sometimes in a manner that contradicted the difficulties they experienced. This was noted for the later implementation.

Technical criteria were also applied to each system and Moodle was seen to be the best technical fit for the School. The School’s executive also appreciated the potential to customise the LMS in a way that would set the School apart, while understanding the cost and risks associated with hosting an open source system.

Based on scoring against users’ criteria, blind testing, technical criteria and the blessing of executives, Moodle was chosen as the system to implemetn.

Implementation

With a system chosen, implementation began, involving setup, configuration and customisation. A test and production instance were established so changes could be tested before being deployed. I will create another post or two describing how we have set up our Moodle instance in detail, so others can benefit from that experience.

In summary, choosing to implement and host an LMS meant that more work would need to happen locally, rather than relying on outside help. It was worth doing, but it was a challenge.

Roadshows and Piloting

With a transition at the turn of the year, as well as creating our customisation, we had six months to mentally prepare users for change. A Roadshow of demonstrations was established at weekly staff meetings to demonstrate functionality and develop enthusiasm. Volunteers from different parts of the School were given spaces for teaching, which also helped refine the organisation and configuration of the system.

Communications were send out through various means to inform the community about the system and the coming change.

Migration

In order to minimise disruption to teaching, content from the previous system needed to be migrated. Automated methods proved flawed and resulted in courses that did not resemble the source, so it was determined that manual migration work would be needed. Assistance was sought from outside organisations. One organisation pulled out at the last minute and additional assistance was found in private individuals. It was later found that the second outside organisation was delegating migration work to inexperienced users, creating work that later had to be repeated. The aim of completing the migration before the first training sessions was not met and migration activities continued over the end-of-year break.

Training

Fighting for time with teachers proved difficult. Before the end-of-year break a training session was conducted with the entire staff, which was counter-productive as users had many perspectives and some did not have migrated content to work with. With lessons learned, training after the break, before the return of students, was conducted with smaller, focused groups and proved reasonably successful. A series of subsequent voluntary CPL sessions was interrupted by the advent of COVID-19.

End Results

We’re now winding down the project, but planning to make continual improvements. When our School closed due to the COVID-19 pandemic and regular learning became Remote Learning, the recent training for teachers meant they were more prepared than they would have been if we hadn’t recently transitioned. As the system became the primary modality for teaching, engagement in the system increased dramatically.

We have achieved almost all the distinctive criteria in the new system, which will hopefully achieve more from the School than other systems would have.

3 thoughts on “An LMS Transition Project”